It’s another photograph of the penis [transplanted from the owner’s middle finger], now with a rustic ceramic pitcher hanging off of it. Exactly as you or I could put a hand out, palm up, and hang a pitcher off our middle finger. The pitcher is hand-painted, white with a red and green floral pattern. I’m picturing the man and his partner again, this time having lunch in the kitchen. A little iced tea, my love?

Eventually the day arrives when no further breach is possible. Mr. Baron, wrote his doctor, Richard Martland, “was now informed, the only chance for saving his life was by making an artificial anus . . . the inconveniences resulting from such operation, were candidly pointed out to him.” Mrs. White, on the occasion of her twelfth day without a bowel movement, is presented with the same proposition. Both patients consented. Or rather, as Pring put it, “did not violently object.” And so it went. “An opening was now made . . . and instantly a large quantity of liquid feces and wind escaped,” Martland wrote. Both doctors were impressed by the force with which the matter was expelled, with Pring taking additional note of the “considerable distance” traveled. – There was no mention of a fan in the vicinity.

This book certainly lends new meaning to the expression “an heir and a spare.”

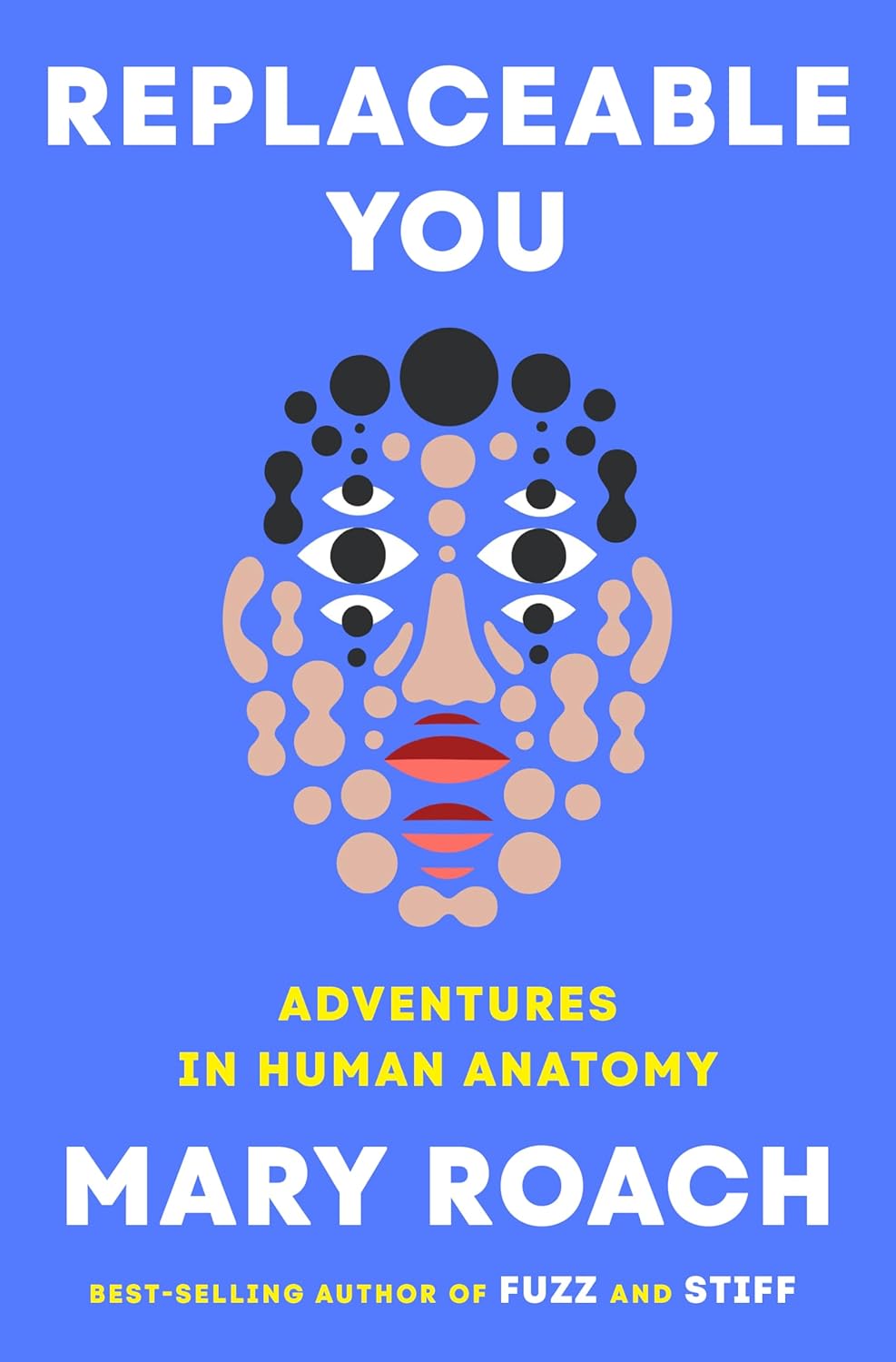

I imagine many of us, certainly folks of my demographic, have had the experience of having parts replaced, probably because of need, but for some because of choice. I know I would happily have my begrudging spine replaced if I had the chance. And there are certainly a few improvements I could use that would be competing for second place on that list. Thus, my interest in Mary Roach’s latest, Replaceable You. (My ex can attest to the truth of the title’s applicability to the entire me.) Of course, I would read anything Mary Roach has written. She is one of my all-time favorite authors. And just in case her work is new to you, she combines a hard-reporting look at a particular scientific realm with a sense of humor that will leave you gasping for breath. Finish swallowing any liquids before reading her work, as they may come blasting out your nose and mouth before you can get a grip. You have been warned.

Mary Roach – image from Harvard Bookstore – shot by Jen Siska

Roach has made a career of writing about the human body, from conception (Bonk) to grave (Stiff), and beyond (Spook), with travels through the alimentary canal (Gulp), the body’s reaction to space flight (Packing for Mars), to war (Grunt) and even to strangeness having to do with our interactions with animals (Fuzz). This time around, she covers a range of body parts subject to replacing. She sniffs out the history of nose jobs back to the 1500s, and lets us in on why they were in such high demand back then. She brings home the bacon by filling us in on the status of pig research (on, not by) for the purpose of developing organs that can be transplanted into humans, a subject close to my heart.

A formal “miniature swine development” project got underway in 1949, a collaboration between two Minnesota powerhouses, the Mayo Foundation (research arm of the Mayo Clinic) and the Hormel Institute (research arm of pork).

There are sections on the replacement, or enhancement, of sexual organs. One of the opening quotes of this review notes a particular form of actual, not kidding, replacement for a male member. Female anatomy comes in for a bit of consideration as well.

Dow Corning…made the first silicone breast implant, circa 1961, this one at the urging of Texas plastic surgeon Thomas Cronin. Cronin had been “inspired by the look and feel of a bag of blood.” It is hard for me not to picture the scene: Cronin standing around the OR, idly gazing at a bag hanging on a transfusion pole and turning to a colleague: Hey. Does that remind you of something? Cronin contacted Dow, and his resident, Frank Gerow, implanted some prototypes into dogs. The team were “excited” by the outcome—and again, stop me from picturing this.

Continuing downstairs…

Who looks at the human digestive tract and thinks, Moist, tubular, stretchy . . . Might that make a reasonable vagina?

Some, apparently. The derriere comes in for a long look as well. Part of this is a consideration of the changing fashions in tush shape, stretching from sane to anime.

I have made it to (well into) septuagenarian status with what is probably an unremarkable range of replacement parts. My right arm was the recipient of two metal plates and a bone graft from my hip after a rather nasty industrial accident in 1970. The plates were removed once they had done their job of helping my bones knit, a few years later. The bone graft helped heal another fracture, and was eventually absorbed back into my skeleton.

Roach tells of prosthetic limbs, and the surprising news that some people prefer them to the original. In fact, the initial inspiration for this book came from a reader who had suggested that Mary take on the exciting world of football referees. Understandably, that failed to score, but it turned out that the woman, who has spina bifida, had a problem with her foot. It had, as a result of her other difficulties, become less than useless. She wanted it removed so she could get a prosthetic, but few surgeons would consider electively removing it. Mary joined her at the Amputee Coalition National Conference, and the game was…um…afoot.

It will come as no shock that using one’s own body bits as a source of transplantation offers the huge advantage of sparing you the need for a lifetime of immunosuppressive drugs. The body’s Studio 54 security guard can take a quick look and wave the new part in as the right sort. It worked for me a second time.

Other recent personal additions were the product of open-heart surgery, three stents and an aortic valve replacement. The stents were made up of material extracted from my right leg, and the valve was contributed by a member of our porcine community. I have yet to experience any desire to go digging for truffles in nearby woodlands.

Roach takes joy in comparing the techniques and tools of hip replacement surgery to woodworking, noting that while patients could actually be conscious during the surgery, the clanging, sawing machinery noise would be so disturbing that it is deemed preferable for patients to remain unconscious for the duration. She says that “Hip replacement has the visual drama of a visit to a Chevron station.”

Sadly, I was born with a mouthful of (well, maybe not born with, which would be weird, but ultimately host to a couple of sets, baby and adult) soft teeth (my dentist’s words). This led, over time, to a need for fillings, caps, bridges and dentures.


If you count up all the teeth that are removed and replaced with implants or dentures, I bet they would lead the pack in sheer numbers of replacement bits. Mary leads us on a walk through dentures in history, the up and down sides, as well as the benefits of not using them at all. Yes, George Washington makes an appearance. You will find the section on spring-loaded replacements both fascinating and alarming. Concerning as well is the fact that so many people have elected to remove healthy teeth in order to install dentures.

Replacing hair is big business these days. But not just up top. Pubic hair is also replaceable. Not only can the short and crinkly be replaced, with hair from the head, but the opposite can be done as well. Of course this can present some grooming challenges, as pubic and head hair grow differently and behave inconsistently when treated with grooming products. Mary subjected herself to a bit of hair harvesting in her travels.

My donor site is smeared with bacitracin, but no gauze is taped in place, because my hair would get stuck. On the flight home, I will feel the back of my head start to ooze. Do you fly Southwest? Don’t sit in 11B.

She delves into the growth industry of 3D printing of bodily materials. It turns out that it is not so simple as it might seem. Each muscle and organ has particular abilities and behaviors that are very difficult to replicate, involving twisting, contracting, and reacting to incoming messaging from the brain. The Star Trek replicator might do a great job of producing “Earl Grey, hot,” but fabricating people-pieces is proving a much more complex undertaking than sci-fi writers imagined.

Eye lenses are being replaced at increasing rates. There are even many people who, as with tooth replacement noted above, are having their natural lenses replaced in order to improve their vision.

I have worn glasses since I was eight years old, for distance. The only issue I had with them was losing track of them. But in the last few years, it became obvious that there was a buildup of material on the lens of my left eye (not just the product of inattentive eyeglass wiping) that made night driving particularly challenging. Oncoming headlights, even non-bright ones, spread a glare across my field of view that was problematic. I had cataract surgery earlier this year (2025), left eye only. And it worked like a squeegee on a filthy window. The headlights are still miserable, particularly when people insist on using their brights, and when many newer cars use lights that are brighter than the prior generations of illumination, but the improvement in my vision was immediate.

She writes about the processes involved in recovering bio-materials from the recently dead, the potential for banking our own cells as a source for future replacement materials, and a machine for offloading heart and respiration work from the body temporarily, and she introduces us to the wonderful world of ostomies, noted up top.

Mary may write about science, but she is not, per se, a scientist. Thus, she can offer us an every-person view into the subjects she investigates. She reacts how we might react when faced with the same discoveries. She brings the oh, wow!, the joy-of-discovery moments to life for readers, and thus makes what she has learned stick for us more than it would from any dry textbook. If she leaves you in stitches, that might just be a part of your replacement work. If you burst with laughter, bust a gut, if you crack up or your laughter is side-splitting, that may be why you needed the work in the first place. Of course, if you laugh your head off, there is probably no short-term solution to that. Replaceable You is the real thing, as is Mary Roach. She is one of a kind. Accept no substitute. She is irreplaceable.

Nana takes a seat at Kuzanov’s desk and starts opening files on his computer desktop. After a few false starts, she locates a folder of photographs documenting a finger transplant performed on a cancer patient. Most of the man’s penis had been amputated, leaving him unable to do two of the things most men like to do with their penis: penetrate and pee while standing. The surgery would restore both. – She missed one.

Review posted – 09/19/25

Publication date – 09/16/25

I received an ARE of Replaceable You from W.W. Norton & Company in return for a fair review. Thanks, folks, and thanks to NetGalley for facilitating. And could you check to see if anyone left any spare spines lying around.

This review is cross-posted on Goodreads. Stop by and say Hi!

===================================EXTRA STUFF

Links to Roach’s personal, Instagram and Twitter pages

Profile – from her site

I grew up in a small house in Etna, New Hampshire. My neighbors taught me how to drive a Skidoo and shoot a rifle, though I never made much use of these skills. I graduated from Wesleyan in 1981 and drove out to San Francisco with some friends. I spent a couple years working as a freelance copy editor before landing a half-time PR job at the SF Zoo. On the days when I wasn’t there in my little cubicle in the trailer behind Gorilla World, I freelanced articles for the Sunday magazine of the local newspaper. One by one, my editors would move on to bigger publications and take me along with them. In the late 1990s, magazines began to sputter out and travel budgets evaporated, and so I switched to books.

People call me a science writer, though I don’t have a science degree and sometimes have to fake my way through interviews with experts I can’t understand.

I have no hobbies. I mostly just work on my books and hang out with my family and friends. I enjoy bird-watching (though the hours don’t agree with me), hiking, backpacking, overseas supermarkets, Scrabble, mangoes, and that late-night “Animal Planet” show about horrific animals such as the parasitic worm that attaches itself to fishes’ eyeballs but makes up for it by leading the fish around.

Interviews

—–Stuff to Blow Your Mind on iHeart – Replaceable You, with Mary Roach by Robert Lamb – audio (36 minutes) with available transcript

—–Peculiar Book Club – MARY ROACH is Un-Replaceable! – video – 1:10:03 – from 5:00

—–NPR – From heart to skin to hair, ‘Replaceable You’ dives into the science of transplant with Brandy Shillace

—–Association of Health Care Journalists – Mary Roach calls herself ‘the gateway drug to science’ by Lesley McClurg

Other Mary Roach books we have enjoyed

—–2021 – Fuzz: When Nature Breaks the Law

—–2016 – Grunt: The Curious Science of Humans at War

—–2013 – Gulp

—–2010 – Packing for Mars

—–2006 – Spook – recently renamed Six Feet Over

—–2004 – Stiff

Items of Interest

—–New York Times – 10 Icky Things Mary Roach Has (Unfortunately) Brought to My Attention – by Sadie Stein – from sundry MR writings, in case you have not laughed enough from reading her latest

—–Bauman Medical – What is a Pubic Hair Transplant?

—–YouTube – A particularly disturbing transplantation scene from the 1973 film O Lucky Man!

Song

—–Beyonce – Irreplaceable